It was one of those days when everything that could go wrong did.

First, my dog decided to take a dump in my shoes. Then I knocked over my cup and splashed coffee onto my new shirt. I almost expected a flat tire, but God gave me bumper-to-bumper traffic on the highway instead.

I was SOOO late that I had to sprint to the office. In my haste, I stumbled on the uneven pavement and dropped my phone, shattering the screen.

Could my day get ANY worse?

It did!

The email campaign I was so sure would deliver impressive results totally flopped, and I was not the least bit happy.

But that was a dozen years ago when I was just stepping into the world of digital marketing. I’m more experienced now, and there are more tools to help with various aspects of marketing.

In total honesty, I do take the blame for that day – not the morning fiasco but the failed email marketing campaign! A/B testing, to a certain extent, could have prevented that from happening.

It’s no surprise, then, that 59% of companies test their email campaigns. And it makes sense. After all, whatever type of online business they run (software, ecommerce, content sites, etc.), they will almost certainly use email in their marketing.

If you aren’t part of that statistic yet and want to learn more, I can help. I’ll share my experiences, explain what A/B testing is, show you what you should be testing, and discuss best practices to help you improve your email marketing campaigns.

Ready to get started?

What is A/B Testing?

Email A/B testing is also referred to as email split testing. Simply put, it’s a way of comparing two versions of an email to see which one performs better.

A/B split testing involves creating and sending two different variations of an email to two different sample groups. That could be as simple as changing the subject lines or CTAs, or as advanced as using different email templates or altering the email design.

By monitoring metrics and analyzing test results, you’ll gain enough insight to find the variation that generates the most opens and click throughs. The winning version can then be sent to the rest of your email subscribers and can become the basis on which you build future emails.

Why You Need A/B Testing for Email Marketing

Still doubting whether you need to include split tests in your email campaigns?

Here are three benefits to put your mind at ease.

1. Create Winning Results

You know how important your friend’s approval is for the outfit you’re planning to wear to a party.

Well, think of A/B testing like that close friend who offers the right suggestions.

Instead of second-guessing which email will or won’t succeed, why not rely on hard evidence?

A/B tests highlight which variant generates the best response. Making small changes to your emails can significantly impact open rates, click throughs, engagement rates, revenue, and, of course, your overall marketing strategy.

2. Get to Know the Target Audience Better

A successful business aligns its email content and products with the needs and preferences of its customers. A/B tests allow you to understand your audience better and discover what’s generating more sales.

Moreover, it can help you reach more people, generate more leads, and grow your following. So you don’t want to miss out on this opportunity to make your business flourish.

3. Gain a Competitive Edge

Considering that only 22% of companies are happy with their current conversion rates, there’s plenty of room for improvement.

A/B testing helps ensure your email marketing strategy produces optimal results. It’s your chance to get ahead of your competitors, especially those that aren’t using split testing.

So if you aren’t using A/B tests, you aren’t tapping into the full potential of your email marketing campaigns.

I could go on with the benefits, but I believe there are more important things to discuss.

So let's start learning how to tweak your emails and make your hard work pay off.

What to A/B Test for Email Marketing – 8 Experiments to Try

Most people don’t know how or what to test, so they simply disregard email A/B testing altogether. If this sounds a lot like you, here are a few email variables you could try testing.

1. Sender Name

The sender name is usually your brand name. You may be surprised to learn that variations on it can drive up open rates.

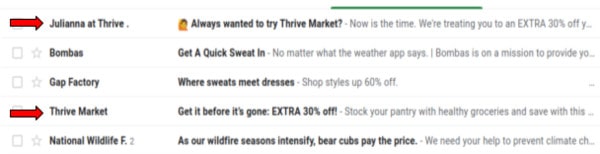

For example, I’ve subscribed to the Thrive Market newsletter. But they occasionally send emails with the following sender name variations:

- Company name

- Team member’s first name + company name

Look at how Thrive Market sends me emails:

Other ideas you can try include:

- Company newsletter

- Company name + department

- Team member’s complete name

- Team member’s complete name + title

I think you get the general idea. However, it’s crucial not to confuse recipients about who sent the email, so never change the sender email address itself.

2. Subject Lines

The first thing your recipients see is the subject line. Before they read any of the email content, they’ve often already decided whether or not to open it.

So subject lines are one of the most important elements you should test. A killer subject line can boost opens by anywhere from 15% to 30%.

So how can you make your email subject lines stand out in the crowded inbox where hundreds of other brands are all fighting for the subscriber’s attention?

Here are a few ideas with examples you can try:

- Length (short or long)

Improve your email click rate

vs

The complete email marketing guide to improve your click through rate

- Question or statement

What’s better for your bedroom: cotton or satin curtains?

vs

Cotton curtains for your bedroom

- Word order

Get 15% off on your next purchase with this discount code

vs

Use this discount code to get 15% off on your next purchase

- Negative or positive statement

How not to clean your kitchen countertop

vs

The ideal way to clean your kitchen countertop

- Use of punctuation, emojis, symbols, numbers, etc. or simple sentences

10 ways not to clean your kitchen countertop

vs

How not to clean your kitchen countertop

- Urgent or non-urgent

Offer expires in 24 hours

vs

Take advantage of this offer soon

- Personalized subject line or not

Joe, your order has been shipped

vs

Your order has been shipped

- Use of company name or not

Macy’s summer clearance sale is here

vs

The summer clearance sale is here

3. Preheaders

Yes, I know the subject line grabbed your attention, but the preheader is what convinces you to open it. How often have you read that little snippet of text next to the subject line to help you decide whether an email is worth opening?

This preheader convinced me 🙂

When done right, the preheader can become a secondary subject line. So you should use this opportunity to offer additional information to support the subject line and convince subscribers to open emails.

You can A/B test the preheader with different messages. For instance, you can:

- Pull the first line of the email

- Use a call to action

- Enhance the sense of urgency

- Summarize the purpose of the email

4. Email Design

The email’s layout should be appealing enough to encourage the reader to finish reading the message or click the CTA.

Simple ways to A/B test email design are:

- Does plain-text format increase engagement, or will adding a few images make a difference?

- Single-column vs. two-column layout?

- What about the placement of different email elements, such as where the CTA button, images, logo, etc., are placed?

5. Email Copy

Once your subscriber opens the email, the body shouldn’t disappoint. How can you ensure the copy reaches its potential? A/B test various aspects, such as:

- Length

- Format

- Bullet points or numbered lists

- Personalization

- Tone and language

- Use of visuals

6. Call To Action

CTAs turn email recipients into visitors, leads, or customers, whether the goal is to get them to visit your website, contact a company representative, or make a purchase.

Therefore, the CTA needs to be clearly visible and compelling, and it makes sense to A/B test which version increases conversions. Elements you can test include:

- Text link or button

- Number of buttons

- Placement of button

- Size and color of the button

- CTA copy

- Size and font of CTA copy

7. Sending Time

Ideally, you want to send emails when people are most likely to read and engage with the content. But to do that, you’ll have to figure out the best day and time to send them.

Research suggests that you can increase open rates by 20% if you can nail both these aspects.

Sounds like a plan!

Since the ideal timing varies from industry to industry and shifts as your target audience’s preferences change, split testing can make this job a lot easier.

And once you’ve figured out the ideal sending time, you can automate email campaigns by scheduling emails to be sent out to subscribers at these times.
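Once a send-time split test has finished, choosing the winning slot comes down to comparing open rates. Here is a minimal Python sketch, assuming you sent the same email to equal-sized random groups at different times and recorded opens and sends for each group. The slot labels, the numbers, and the `best_send_slot` helper are hypothetical, not part of any email platform’s API.

```python
def best_send_slot(results):
    """Return the send slot with the highest open rate.

    results maps a slot label to (opens, sends) for the group that received
    the email in that slot. Slots with zero sends are ignored.
    """
    rates = {slot: opens / sends for slot, (opens, sends) in results.items() if sends > 0}
    return max(rates, key=rates.get)


# Hypothetical results from sending one email to three random groups.
results = {
    "Tue 09:00": (118, 500),
    "Thu 14:00": (141, 500),
    "Sat 10:00": (97, 500),
}
print(best_send_slot(results))  # Thu 14:00
```

This only picks the highest observed rate. Before scheduling all future sends for that slot, it’s worth confirming that the difference is statistically significant rather than noise.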

8. Personalization

Personalization helps produce more targeted emails, builds relationships with subscribers, and significantly improves email marketing metrics.

When you think of personalizing an email, the first thing that comes to mind is typically to use the subscriber's name in the subject line or the salutation.

But there are other ways to personalize your email too.

- What content to send to subscribers

Personalization helps you send the right content to the right subscribers, which makes readers more likely to engage with your email. This is particularly important if you publish a newsletter. A/B test what type of content each segment of your email list is likely to read.

- Remind customers about abandoned shopping carts

You can email people who put an item into their shopping cart but didn’t complete the purchase. You can show photos of the items or offer an incentive, such as a discount, to motivate them to buy. Ecommerce brands can A/B test different promotional offers to see which variant achieves more conversions.

- Offer product recommendations

By tracking a person’s browsing and shopping history, you can suggest items they are more likely to buy. For instance, you could promote something they often look at. And A/B testing can help identify opportunities for you to upsell or cross-sell other items.

How to Set It Up

Now that you have some ideas of what you can test, it’s time for some real action.

Here are the steps to start A/B testing the right way, so you get accurate results you can apply to your current email marketing strategy.

1. Pick Variables You Want to Test

Make a list of every variable you know is worth testing. To evaluate how much a change affects your email campaign, isolate one variable and create two versions of the email that differ only in that variable.
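One way to keep yourself honest about isolating a single variable is to describe each version as a record and check that exactly one field differs. This is a hypothetical Python sketch: the `EmailVariant` fields and the sample values are illustrative assumptions, not taken from any particular email tool.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class EmailVariant:
    # Hypothetical fields for illustration; use whatever your email tool exposes.
    sender_name: str
    subject: str
    preheader: str
    cta_text: str


def changed_fields(a, b):
    """Return the names of the fields whose values differ between two variants."""
    return [f.name for f in fields(EmailVariant) if getattr(a, f.name) != getattr(b, f.name)]


def isolates_one_variable(a, b):
    """True when the two variants differ in exactly one field."""
    return len(changed_fields(a, b)) == 1


control = EmailVariant("Acme Store", "Your weekly deals", "Save on pantry staples", "Shop now")
variant = EmailVariant("Acme Store", "Joe, your weekly deals are here", "Save on pantry staples", "Shop now")
print(changed_fields(control, variant))         # ['subject']
print(isolates_one_variable(control, variant))  # True
```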

2. Have a Clear Hypothesis

You won’t gain much if you randomly make changes to see what works and what doesn’t. Know what you want to achieve and have a good reason why you’re testing a certain variable.

More importantly, decide in advance what result you expect the test to reach and how significant that result must be. How confident do you need to be that the variation really performs better before you act on it?

3. Identify Your Goals

Before you run A/B tests to see better email marketing results, you need to have a clear vision of the goals you want to achieve. Know what you’re testing and why.

What are your goals?

Do you want to see an increase in web traffic, boost conversion rates, reduce bounce rates, or lower cart abandonment? Your plan of action depends on your objectives.

4. Select Sample Groups

You’ll need to send your emails to two groups of subscribers. So draw a random sample of active email addresses, split it randomly into two groups, and send each group one of the variants.
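Here is a minimal Python sketch of a random split, assuming your active subscribers are already in a list. `split_into_groups` and the example addresses are hypothetical. Many email platforms handle this step for you, but the sketch shows what “random” means here: every address has the same chance of landing in either group.

```python
import random


def split_into_groups(addresses, seed=None):
    """Randomly split a list of email addresses into two near-equal groups.

    Works on a shuffled copy, so the caller's list is left unchanged.
    Passing a seed makes the split reproducible.
    """
    rng = random.Random(seed)
    shuffled = list(addresses)
    rng.shuffle(shuffled)
    middle = len(shuffled) // 2
    return shuffled[:middle], shuffled[middle:]


# Hypothetical list of active subscribers.
subscribers = [f"user{i}@example.com" for i in range(10)]
group_a, group_b = split_into_groups(subscribers, seed=7)
print(len(group_a), len(group_b))  # 5 5
```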

5. Measure the Results

Measure the performance of the email variations. Monitoring the right metrics will help you discover how successful the change has been.

More importantly, determine whether the results are statistically significant. In other words, is the difference between the two versions large enough, given your sample size, that it’s unlikely to be due to chance alone?
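A common way to check significance for rates such as open rate or click through rate is a two-proportion z-test. The Python sketch below is a textbook version of that test, not necessarily what your email tool uses, and the counts are hypothetical.

```python
import math


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Two-sided z-test for the difference between two rates (e.g., open rates).

    Returns (z, p_value). Uses the pooled rate for the standard error, the
    usual form when the null hypothesis is "no difference between versions".
    """
    rate_a = successes_a / n_a
    rate_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # both groups all-or-nothing and identical: no evidence of a difference
    z = (rate_b - rate_a) / se
    # Two-sided p-value from the standard normal distribution: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: 1,000 sends per version, 200 vs. 240 opens.
z, p = two_proportion_z_test(200, 1000, 240, 1000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

With these numbers the p-value comes out at roughly 0.03, below the conventional 0.05 cutoff that corresponds to 95% confidence, so version B’s higher open rate is unlikely to be a fluke.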

6. Analyze the Results

A test showing that one version is better than the other means you’ve found a way to improve your email marketing campaign.

But some tests are inconclusive, meaning neither version was statistically better. In that case, stick with the original version and run other A/B tests.

7. Repeat

Just because you’ve discovered one way to optimize your email doesn’t mean you can call it a day. It’s time to conduct further tests on other email elements.

A/B Testing Best Practices

Now that you’ve learned how to set up split testing, it’s time to learn how to do it properly. Here are 8 easy tips to ensure you get the best results.

1. Test One Variable at a Time

You should test each variable separately to find which aspect produces the best results. However, it’s imperative to send both versions at the same time, so differences in timing don’t skew the results.

Take, for example, your email subject line. Since it’s the first point of interaction between you and your subscriber, it needs to be top-notch. So it only makes sense to A/B test the subject line before anything else.

I’ve already mentioned some experiments you can try. Remember, subject lines that create curiosity help your audience engage better with your brand.

Once you find the subject line that has the greatest impact on the open rate, A/B test the preheader.

One way to find the winning combination is to run two separate tests. First, test two different subject lines without changing the preheader. Then use the winning subject line with two different preheaders. Combining the winning subject line and preheader can generate optimal results.

But be careful how often you reuse a winning combination. Overuse tends to weaken its performance over time.
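The two-stage approach (subject line first, then preheader) can be sketched in Python like this. The open counts are hypothetical, and the `pick_winner` helper only compares observed open rates; in a real test you’d also check that each difference is statistically significant before moving on to the next stage.

```python
def pick_winner(results):
    """Return the variant with the highest open rate; results maps variant -> (opens, sends)."""
    return max(results, key=lambda variant: results[variant][0] / results[variant][1])


# Stage 1 (hypothetical numbers): vary only the subject line; the preheader stays the same.
stage_1 = {
    "Subject A": (210, 1000),
    "Subject B": (245, 1000),
}
best_subject = pick_winner(stage_1)

# Stage 2: keep the winning subject line and vary only the preheader.
stage_2 = {
    (best_subject, "Preheader X"): (252, 1000),
    (best_subject, "Preheader Y"): (231, 1000),
}
best_combination = pick_winner(stage_2)
print(best_combination)  # ('Subject B', 'Preheader X')
```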

2. Don’t Overlook Preheader Testing

Since split testing takes time and effort, many marketers focus on other email tests and overlook the preheader. But considering the vital role it plays in convincing people to open your email, it deserves more attention.

Use this space to elaborate on the subject line, not repeat it. Keep in mind that different screens display different amounts of text, but whether your subscribers read on a phone or a laptop, there’s only a limited amount of space.

Successful preheader text often includes one or two keywords or a recognizable phrase. Don’t worry if you aren’t sure which ones; split tests can help you find them.

3. Pick a Big Enough Sample Size and Run the Test Long Enough

To get statistically significant results, you want to test a sample size that’s big enough and give recipients enough time to engage with your emails in order to get accurate insights about your target audience.

But I was left wondering: how big is “big enough”, and how long is “long enough”?

First, let’s consider sample size.

If you have a large email list (say, more than 1,000 subscribers), you can use the 80/20 rule. That means 20% of your subscribers become your test group: 10% receive version A while the other 10% get version B. The variant with the better result is then sent to the remaining 80%.

But if you have a small email list, like 100 subscribers, it’s best to use the 50/50 rule. You’ll end up testing the entire list. Version A is sent to half while the other half gets version B. This allows you to gather enough data on which you can build a winning version for your next email campaign.
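The 80/20 and 50/50 rules translate into simple arithmetic. This Python sketch (the `plan_split` name is hypothetical) uses the 1,000-subscriber cutoff mentioned above as its assumed threshold and returns the size of each test group plus the number of subscribers who will later receive the winning version.

```python
def plan_split(list_size, large_list_threshold=1000):
    """Return (group_a, group_b, remainder) sizes.

    Lists larger than the threshold follow the 80/20 rule: 10% get version A,
    10% get version B, and the winner later goes to the remaining 80%.
    Smaller lists follow the 50/50 rule: the whole list is split in half.
    """
    if list_size > large_list_threshold:
        test_size = list_size * 20 // 100  # integer math avoids float rounding
    else:
        test_size = list_size
    group_a = test_size // 2
    group_b = test_size - group_a
    remainder = list_size - test_size
    return group_a, group_b, remainder


print(plan_split(5000))  # (500, 500, 4000)
print(plan_split(100))   # (50, 50, 0)
```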

As a general rule, aim to have a big test audience to ensure test results are statistically significant and not simply because of randomness.
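How big a test audience is “big enough” depends on how small a difference you hope to detect. A common back-of-the-envelope answer is the standard sample-size formula for comparing two proportions, sketched below in Python. The 95% confidence level, 80% power, and open rates are assumptions; plug in your own.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.8):
    """Approximate number of recipients needed in EACH group to detect the given lift.

    Normal-approximation formula for comparing two proportions:
    n = (z_{1-alpha/2} + z_{power})^2 * (p1(1-p1) + p2(1-p2)) / (p1 - p2)^2
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = baseline_rate * (1 - baseline_rate) + expected_rate * (1 - expected_rate)
    n = (z_alpha + z_power) ** 2 * variance / (baseline_rate - expected_rate) ** 2
    return math.ceil(n)


# Hypothetical: a 20% baseline open rate.
small_lift = sample_size_per_variant(0.20, 0.22)  # detecting a 2-point lift
large_lift = sample_size_per_variant(0.20, 0.25)  # detecting a 5-point lift
print(small_lift, large_lift)
```

With a 20% baseline, detecting a lift to 22% needs roughly 6,500 recipients per group, while a lift to 25% needs only about 1,100. The smaller the improvement you expect, the larger the sample you need.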

Secondly, give the test enough time to produce useful data.

The window of time you give test groups to open and engage depends on what you’re testing.

For instance, small changes to a call to action or email subject line can be tested in a shorter time than tests that involve more drastic changes, such as rebranding or layout changes.

But generally, the longer the window of time you set, the better information you’ll get. Because at the end of the day, it’s about using representative samples to gather enough data to validate (or invalidate) a hypothesis.

4. Use a Test Group of Active Subscribers That Are Similar

Before you conduct A/B tests, make sure both groups consist of active subscribers with similar personas. This helps ensure that other factors aren’t skewing the test results.

However, if you’re testing re-engagement emails, you’ll obviously be targeting inactive subscribers.

5. Test Email Content Regularly

Test the language and tone of your email copy. For instance, using a positive tone can motivate readers to take action, helping to drive click throughs and conversions.

Furthermore, if you have a blog, you can email multiple pieces of content, such as in a newsletter. You can use blog post headlines in the subject line and monitor which one gets the higher open rate. This can improve conversions and also shows you what content resonates with your audience.

6. Test for Responsive Design

81% of people prefer to open emails on their smartphones. So testing responsive design is crucial. Common aspects to consider are font and CTA size. When emails are easier to read on the go and CTAs are finger-friendly, you’re likely to see a noticeable improvement in your click through rate.

7. Use A/B Testing Tools

Ah, what would we be without these handy email marketing tools? Pick the right one for your testing needs and budget. Some popular ones include:

8. Go Beyond Simple Split Testing

While it makes sense to start off slow, you should also consider bandit testing and multivariate testing.

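With regular A/B tests, you identify the winning version once the test ends and then send it to everyone else. Bandit testing, by contrast, shifts more of your audience toward the better-performing variation while the test is still running, so fewer subscribers receive the losing version.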
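To make the bandit idea concrete, here is a minimal Python simulation of epsilon-greedy, one of the simplest bandit strategies. It is an illustrative sketch, not how any particular email tool works (commercial tools may use other algorithms, such as Thompson sampling), and the “true” open rates are hypothetical, since in real life you never know them.

```python
import random


def choose_variant(stats, epsilon, rng):
    """Epsilon-greedy choice: explore a random variant with probability epsilon,
    otherwise exploit the variant with the best observed open rate.
    Variants that haven't been sent yet are tried first.
    """
    if rng.random() < epsilon:
        return rng.choice(list(stats))

    def observed_rate(variant):
        opens, sends = stats[variant]
        return float("inf") if sends == 0 else opens / sends

    return max(stats, key=observed_rate)


def simulate(true_open_rates, total_sends, epsilon=0.1, seed=42):
    """Simulate a bandit test; true_open_rates are hypothetical and unknown in practice."""
    rng = random.Random(seed)
    stats = {variant: [0, 0] for variant in true_open_rates}  # variant -> [opens, sends]
    for _ in range(total_sends):
        variant = choose_variant(stats, epsilon, rng)
        opened = rng.random() < true_open_rates[variant]
        stats[variant][0] += int(opened)
        stats[variant][1] += 1
    return stats


result = simulate({"A": 0.20, "B": 0.30}, total_sends=10_000)
print(result)  # most sends end up going to B, the better variant
```

The trade-off is the share of sends (here about 10%) still spent exploring, which is what lets the test notice if the leader changes.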

On the other hand, multivariate testing allows you to test multiple variables at one time rather than just one.
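A quick way to see why multivariate tests need bigger lists is to count the combinations. This Python sketch uses hypothetical options for three variables and builds every combination with `itertools.product`.

```python
from itertools import product

# Hypothetical options for three variables.
subject_lines = ["Offer expires in 24 hours", "Take advantage of this offer soon"]
preheaders = ["Save 15% on your next purchase", "Your discount code is inside"]
cta_texts = ["Shop now", "Claim my discount"]

# Every combination of one subject line, one preheader, and one CTA.
variants = [
    {"subject": s, "preheader": p, "cta": c}
    for s, p, c in product(subject_lines, preheaders, cta_texts)
]

print(len(variants))  # 8 (2 * 2 * 2)
```

Each of those eight combinations needs its own test group of adequate size, so the audience required grows multiplicatively with every variable you add.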

A/B Testing Mistakes to Avoid

Split testing isn’t too difficult to set up, especially with the availability of easy-to-use tools on the market.

However, there are some glaring mistakes that marketers often make. The biggest mistake is simply not performing A/B testing.

But besides this, there are other ways that you could be wasting time and resources during testing. To ensure this doesn’t happen to you, here are mistakes you should avoid:

1. Concentrating On Trivial Details First

Sure, you’ll need to test various details. However, start with those that can significantly impact your email campaigns first.

For instance, testing the color of the CTA button is not as important as the wording of the subject line. Focusing on more notable aspects first can yield impressive results more quickly.

2. Not Testing Far Enough Down the Funnel

It’s great that you’re looking into variations such as which subject lines drive more opens and which email content receives more clicks.

But don’t limit yourself to only these metrics.

At the end of the day, you need a variation that generates more conversions. That is the key metric that highlights the success of your email campaign.

3. Calling Tests Too Early

It happens all the time – you run a bunch of split tests, declare a winner, and roll it out. But the conversion rate stays the same.

Why?

Because the tests were called too early (or the sample size was too small). To prevent this, don’t start testing until you have a sufficient sample size, and don’t stop until:

- You’ve completed multiple sales cycles (approximately 2 to 4 weeks each). If you stop too soon, even with a good sample size, you won’t have collected a representative sample.

- Your results reach statistical significance at a confidence level of about 95%.
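The stopping rules above can be folded into a single check. This is a hypothetical Python helper, not a feature of any tool: it assumes “multiple sales cycles” means at least two (`min_cycles=2`) and treats 95% significance as a p-value below 0.05. Adjust both to your own business.

```python
def ready_to_stop(days_running, sales_cycle_days, sends_per_variant, required_per_variant,
                  p_value, min_cycles=2, alpha=0.05):
    """Return True only when all stopping rules are met.

    Rules (thresholds are assumptions): the sample is big enough, the test has
    run for at least min_cycles sales cycles, and the result is significant
    at the 1 - alpha level (95% by default).
    """
    enough_sample = sends_per_variant >= required_per_variant
    enough_cycles = days_running >= min_cycles * sales_cycle_days
    significant = p_value < alpha
    return enough_sample and enough_cycles and significant


# Hypothetical test with a 14-day sales cycle.
print(ready_to_stop(days_running=28, sales_cycle_days=14, sends_per_variant=6600,
                    required_per_variant=6507, p_value=0.03))  # True
print(ready_to_stop(days_running=10, sales_cycle_days=14, sends_per_variant=6600,
                    required_per_variant=6507, p_value=0.03))  # False: stopped too early
```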

4. Not Accepting the Truth

It’s easy to become attached to your hypothesis. And it hurts when things don’t work out as you thought.

But being adamant and rejecting failure can cause a great deal of harm to your email campaign.

So accepting the truth is more important than declaring a winner.

5. Not Digging Deeper Into Test Data

When interpreting test results, you need to look closely at other details as well, such as where the recipient stands in the sales funnel, their location, etc. Otherwise, you could end up misinterpreting signals and applying the wrong findings to your email campaigns.

So aim to analyze the results across different segments of your audience. With the help of segmented analytics, you can understand why some variants perform better with certain groups.

6. Ignoring Small Improvements

Don’t be disappointed by small gains. In fact, winning tests sometimes beat the control by only a small margin, maybe as little as 1%.

But depending on your absolute numbers, even a 1% gain can add up to thousands in revenue in a single month. More importantly, if you look at the bigger picture, these small gains can add up considerably over the course of a year.

So don’t hesitate to implement a change just because the improvement seems small.
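A quick worked example of how a small lift adds up. All figures below are hypothetical, and the compounding line assumes you stack twelve separate 1% wins, each measured on top of the previous one.

```python
# Hypothetical figures for illustration only.
monthly_email_revenue = 250_000  # dollars
lift = 0.01                      # a 1% improvement from a winning test

extra_per_month = monthly_email_revenue * lift
extra_per_year = extra_per_month * 12

# Assumption: twelve separate 1% wins (say, one per month), each building on the last.
compounded_lift = (1 + lift) ** 12 - 1

print(f"Extra revenue per month: ${extra_per_month:,.0f}")  # $2,500
print(f"Extra revenue per year:  ${extra_per_year:,.0f}")   # $30,000
print(f"Twelve stacked 1% wins:  {compounded_lift:.1%}")    # 12.7%
```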

It’s Time to Test Your Email Campaigns

Email marketers are always searching for techniques that can increase revenue and ROI.

As you’ve learned by now, A/B testing can help.

Like everything else in digital marketing, the only way to know what works and what doesn't is to simply go ahead and test it. Knowing whether your emails are on the mark can help you improve your marketing campaigns, and ultimately your bottom line.

But split testing isn’t something you can do once and tick off your to-do list. You’ll have to repeat it as your audience evolves and the business grows. Moreover, the variables you test may change from time to time.

As you put in time and effort to thoughtfully and strategically test many things, you’ll discover little improvements that can optimize your emails and, in the process, create a stunning winner!

And speaking from experience, I recommend you consider taking A/B testing into other areas as well, such as website layout, landing pages, content marketing strategies, and beyond.

But I’ll leave these topics for another day.

In the meantime, let’s discover how to take your email marketing campaigns to the next level.